Mathematics of Types

This post is my understanding of a paper by Conor McBride titled The Derivative of a Regular Type is its Type of One-Hole Contexts. But before we get to calculus, let's start with something simpler, like algebra.

I will use Scala for this post because it's a beautiful and concise language with amazing support for algebraic data types. But many modern languages also have good support ADTs, pick your poison.

Algebra

To talk about how types can even have algebras, let's first understand what an algebra even is. An algebra is something that has

Symbols (Things)

- In your school algebra you had concrete symbols like , , or generic symbols (or variables) like , etc.

- In the algebra of types our symbols are types, such as

Unit,Int,Boolean, or generics types, as in parametric polymorphism

Operators (How to make new things)

- In your school algebra these were ways to combine the numbers to make new numbers like , , , . They are not Symbols themselves, but they are symbol constructors.

- In the algebra of types these are ways to combine types to get new types like

List,OptionandEither. They are not types themselves but type constructors.

Laws (Rules that things follow)

- We will get into Laws in a bit, but just for reference, these are rules like or

Symbols

Types can have a 1 to 1 relationship to numbers (an isomorphism), so we can reuse and explore a lot of what we know from school algebra already. How can we assign numbers to types?

One way to do it would be to count the number of values inhabited by that type. We are looking at types as bags of values (I am avoiding the word Set on purpose).

For example, how can we represent ? We need a type that has no members at all, or a so-called Bottom Type. One that you can never actually instantiate. In Scala's type system, it's called Nothing. You could even make your own enum with no members. (Unfortunately, the enum keyword expects at least one variant but you can always make a sealed class with no subclasses.)

sealed abstract class Zero

What about ? A type that is only occupied by a single value, or a so-called Unit Type In Scala there's Unit. This could also be any type that has exactly one element. Like an empty option.

type One = None.type

Or an empty list.

type One = Nil.type

What about ? A type that is either something or something else, doesn't really matter what those things are, the important part is that there are 2 of them. An obvious choice is Boolean. How about ? I can't think of a type in the base language but you can always make your own!

enum Color:

case Red, Green, Blue

So the idea is that types are bags that contain some countable number of values and you can think of that count as their counterpart in school algebra.

Operations: Addition

Let's explore what operations look like for types. A simple one is the addition operator. For instance, let's explore what would look like in the land of types. We already established that is Boolean. A bag that holds . And is Color. A bag that holds . We also know . How can we combine Boolean and Color to a bag that has 5 variants? Namely . With an enum of course!

enum Five:

case Two(boolean: Boolean)

case Three(color: Color)

You can just add arbitrary numbers and you can also just add arbitrary types. This is why enums are also called sum types.

enum +[A, B]:

case Left(a: A)

case Right(b: B)

type Five = Boolean + Color

Actually, many languages have a built-in type constructor for addition, like Scala's Either or Rust's Result, commonly used for error handling.

type +[A, B] = Either[A, B]

type Five = Boolean + Color

Operations: Multiplication

The next operator to explore is multiplication, we will now try to represent to try to find a way to combine Boolean and Color to create a new type with variants. You can do this by creating pairs of these values.

How can we represent this in code? With something like a struct or a class.

case class ×[A, B](a: A, b: B)

type Six = Boolean × Color

Again, many languages also support tuples which let you multiply as many types as you want. This is why classes and tuples are called product types.

Operations: Exponentials

An interpretation of exponentials is to represent them as enumerating choices. When writing code, you choose what to return based on an input. So in some sense exponentials are functions. For a signature Color => Bool, let's enumerate all the choices you can make on how to implement it.

type Choice = Color => Bool

val allTrue: Choice = _ => true // 1

val allFalse: Choice = _ => false // 2

val isRed: Choice = _ == Color.Red // 3

val isGreen: Choice = _ == Color.Green // 4

val isBlue: Choice = _ == Color.Blue // 5

val notRed: Choice = _ != Color.Red // 6

val notGreen: Choice = _ != Color.Green // 7

val notBlue: Choice = _ != Color.Blue // 8

There are 8 ways to implement this. For any function A => B you have B different possible return values for each value of A so you can think of it as .

type Exp[Base, Exponent] = Exponent => Base

Laws

Armed with what we learned so far we can now try to interpret some basic laws in algebra, with types. We can then observe that Algebraic Laws translate into refactoring transformations that we can apply to our code.

Let's take a simple law and observe how it translates to Scala.

Either[Nothing, X] ≈ X

To translate this into words, we know at compile-time that this Either can never be occupied by a Left variant. That would imply that you managed to instantiate a value of type Nothing, which we KNOW is empty and has no inhabitants. Either[Nothing, X] is ALWAYS some Right(x). So you could just treat it as an X. It is isomorphic to it. Let's look at another one.

(Unit, X) ≈ X

Tupling any X with Unit, does not add any information to X. You can always tuple X with Unit and strip that Unit back again. So it is isomorphic X. Let's look at something slightly less trivial and slightly more useful.

X => (A, B) ≈ (X => A, X => B)

An exponential of a product is equivalent to the product of each component exponentiated. This translates into code that any function returning a tuple can be unzipped into a tuple of two functions.

Lists

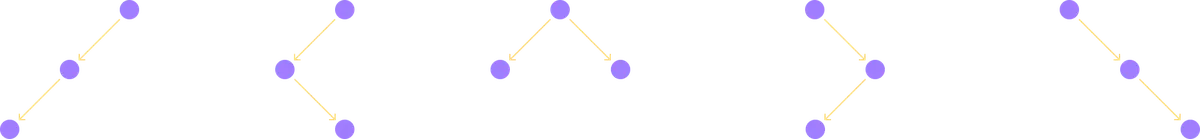

We are now going to try to represent a recursive type. Singly linked lists are implemented in many functional languages as cons lists. With a representation similar to the following:

enum List[A]:

case Nil

case Cons(head: A, tail: List[A])

Let's try to represent this algebraically.

This definition is recursive because List[A] is defined in terms of List[A]. We can use this definition to substitute the List[A] on the right-hand side. Doing this once yields:

We can do this again!

Doing this substitution infinitely many times yields:

It turns out singly linked lists are just the geometric series!

You can justify this to yourself this way, a List[A] is one of

- Empty ()

- A single element of type

A() - Two elements of type

A() - Three elements of type

A() - Four elements of type

A() - ...

Now, I am going to abuse our mathematical notation a bit. Let's go back to our original definition of cons lists.

We can find the value of List[A] by isolating all the List[A]s on the left-hand side of the equation. Let's ignore for now that we don't even know what subtracting two types means.

We can now factor out List[A] from the left-hand side of this equality.

Now we can isolate List[A] on the left-hand side by dividing both sides by . Of course for now we just ignore that we don't know what this operation really means for types.

You might have already noticed, that this is the closed form of the geometric series (under specific bounds, but we are playing incredibly fast and loose with both mathematics and type theory, for fun and profit).

Here is the fun part. We can actually take the Taylor expansion of this expression to regain our original definition of lists!

First, let's recall that the Taylor expansion around the point for a function can be represented as

Also, notice that

Around the point this simplifies to

Plugging this back in, we get

The fact that we can use Taylor expansions will later help us turn seemingly meaningless expressions, into ones that are meaningful in the landscape of types. If you want to read a bit more about negative and fractional types this paper could be a good read.

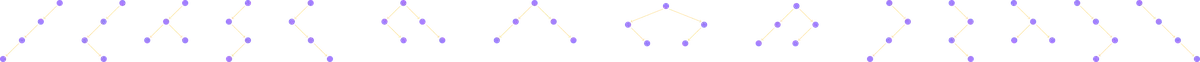

Trees

Another fundamental recursive type is the binary tree, so let's look at those next. One representation of a binary tree is the following:

enum Tree[A]:

case Leaf

case Branch(left: Tree[A], value: A, right: Tree[A])

I hope by now you can see that this can be represented algebraically as:

This is in fact a quadratic equation! And you hopefully remember the formula we can use to solve these kinds of equations:

Plugging that in we get:

That is absolutely horrifying. But fret not, remember that Taylor expansions can save us. Going through the motions we can actually derive the infinite series form of a binary tree as:

We have discovered a way to count the number of possible binary trees of any size!

- The number of empty binary trees is

- The number of binary trees with a single element is also

- There are binary trees of size

- There are different binary trees of size

- There are different binary trees of size

By the way, these particular coefficients show up a lot in counting problems, they are called Catalan numbers if you want to read more about them!

Zippers

Let us take a small detour from Algebras to talk about a very useful family of data structures called Zippers. To motivate them, let's assume that you are building a text editor. And of course, you are doing it in a purely functional way! A document is usually built up of many lines. Each line is a sequence of characters and you have decided to represent each line as a singly linked list List[Char]. Your cursor can go anywhere in that line and you want to be able to quickly jump between characters with your cursor and edit the text. By quickly I mean you want to do it in time complexity. Zippers are exactly the data structure you need. Let's assume that your line has the following text:

the quick brown fox ju[m]ps over the lazy dog.

Your cursor (the focus element) is on the letter m in the word jumps. The requirements are that you need access to the letters immediately neighboring the focus element. In this case, you need to quickly switch the focus to u on the left side (j[u]mps) or p on the right side (jum[p]s). What kind of data structure would give you this desired time complexity?

Let's separate the line into different segments. The left segment, the focus element, and the right segment.

Consider for now only the right segment. If the right segment is a singly linked list of all the characters to the right of the focus element, then we can, in time, decompose the right segment into a head and a tail.

The head (p) is now the new focus element, and the tail (s over the lazy dog.) is the new right segment. So far so good. However, we now need to append the old focus element to the end of the old left segment. This is bad in the current setup because appending to the end of a singly linked list takes time.

But all is not lost, what if we kept the left segment in reverse order instead?

Now after peeling off p we can prepend the m to the beginning of the left segment in time to get:

Conversely, if you wanted to move the cursor to the left, you can decompose the left segment into a head (u) and a tail (j xof nworb kciuq eht). Now the head is the new focus element, and the tail is the new left segment. The old focus element m can now be prepended to the right segment in time to give you:

They are called Zippers because you can imagine the focus element as the zipper-pull on your jacket's zipper. It can zip up and down and focus on the right "tooth".

In your cool new text editor, you can have two zippers, one for the cursor in the current line and another for the current line in the document!

Don't forget that this is only the zipper for a singly linked list, any immutable data structure can have its own zipper, and that zipper will let you focus on an element and edit that immutable data structure efficiently. So far then, we know, that a zipper for a List[A], is an A, alongside two List[A]s for the left and right segment.

Zipper[List[A]] = (List[A], A, List[A])

One-Hole Context

A one-hole context is the same thing as a zipper, except that there's a hole in place of the focus element!

So the one-hole context of a List[A] is two List[A]s (the left segment and the right segment), forgetting the current focus.

Zipper[List[A]] = (List[A], A, List[A])

OneHoleContext[List[A]] = (List[A], List[A])

Now, as an exercise let's examine the one-hole context of tuples of increasingly bigger sizes. We will do this by selecting a focus element and looking at the "leftovers".

First a Tuple1 or a single element. In this case, you can only focus on that single element. What is left around it is Unit (If this doesn't make sense, remember you can always Tuple anything with Unit because ).

Let's go one size up, what about a tuple with 2 elements like (A, A) or You can either focus on the left A then your leftover is the right A, or you can focus on the right A and your leftover is the left A. So it is .

Now let's try a tuple of 3 As like (A, A, A) or . Similarly, you can select any of the 3 As to be the hole and you are left with 2 other As. So the one-hole context is .

Do you notice something funky? The one-hole context seems to be the derivative of the type! The one and the same derivative you learned in high school. As a side note, I find it hilarious that in the paper Conor writes this absolutely unhinged sentence:

As I wrote down the rules corresponding to the empty type, the unit type, sums, and products, I was astonished to find that Leibniz had beaten me to them by several centuries: they were exactly the rules of differentiation I had learned as a child (???).

Anyway, let's confirm this by checking a List[A].

Recall from previous sections that

Taking a derivative of both sides yields:

The derivative of is or two lists. Just as we discovered earlier.

This is super useful! Because remember that we can turn any one-hole context into a zipper by adding a single focus element. And those zippers are very useful data structures that allow us to efficiently traverse immutable data types.

The connection between zippers and derivatives isn't just a mathematical coincidence; it reflects a deep similarity in what these concepts measure.

In calculus, a derivative measures how a function changes at a particular point. If you zoom in close enough to a curve, the derivative tells you about the local structure around that point.

Similarly, a zipper gives you the local structure around a particular position in your data structure. Just as the derivative of a function tells you how to move slightly up or down the curve, a zipper tells you how to move slightly left or right in your data structure.

Let's make this even more concrete. When you take the derivative of , you get . This tells you that at any point , the local rate of change depends on twice your current position. Similarly, when you take the derivative of a binary tree (which we'll see later), you get a structure that tells you about the two possible directions (left or right) you can move from your current position, along with the context needed to reconstruct the tree after moving.

In both cases, the derivative captures the essential information about local movement.

Traversing trees

Let's use what we learned to find a zipper for a binary tree! Recall that:

Taking a derivative from both sides and applying the chain rule yields:

Collecting the derivatives on one side and dividing things out gives you the final derivative:

It does look scary but don't forget we know that is just . We also know that is just Boolean. So simplifying out we get:

OneHoleContext[Tree[A]] = (Tree[A], Tree[A], List[(A, Boolean, Tree[A])])

Let's try to make sense of this to ourselves. Imagine you are sitting in a binary tree. You are sitting in the hole, so forget the element that you are sitting on. If you look "down", you can see your left child and your right child, each of which are themselves binary trees. So far we have justified as what you have underneath you.

Now look above you, you have traversed a path to get here. At each crossroad in that path, you made a binary decision to either go left or right, that's the Boolean! When you went in one direction, you visited an element of type A and ignored another subtree on the other side that you did not visit! So your path can be described as a list of .

Now let's try to implement zipUp and zipDown based on this intuition.

First, we define some types:

enum Tree[+A]:

case Leaf

case Branch(left: Tree[A], value: A, right: Tree[A])

enum Direction:

case Left, Right

type TreeCtx[A] = (Tree[A], Tree[A], List[(A, Direction, Tree[A])])

When zipping up, we need to peel off the head of the path we took. The head includes an element, a direction, and another tree. The tail is the new path. The element is our new focus element! Now we need two trees for our left and right child. One of those is the leftover tree we peeled off from the path. The other we have to create ourselves, by adding the old focus element to the old left and right subtree. Based on the direction we peeled off we can get a new binary tree!

def zipUp[A](focus: A, context: TreeCtx[A]): Option[(A, TreeCtx[A])] =

val (left, right, path) = context

val current = Tree.Branch(left, focus, right)

path match

case (value, dir, otherTree) :: leftover =>

dir match

case Direction.Left => Some((value, (current, otherTree, leftover)))

case Direction.Right => Some((value, (otherTree, current, leftover)))

case Nil => None

Zipping down is even easier! We have a choice to go left or right. We pick a direction and choose the corresponding subtree from our context, and then we generate the new context by pretending the old focus, the direction, and the ignored subtree to our path!

def zipLeft[A](focus: A, context: TreeCtx[A]): Option[(A, TreeCtx[A])] =

val (left, right, path) = context

left match

case Tree.Leaf => None

case Tree.Branch(newLeft, newFocus, newRight) =>

Some(

(

newFocus,

(newLeft, newRight, (focus, Direction.Left, right) :: path)

)

)

def zipRight[A](focus: A, context: TreeCtx[A]): Option[(A, TreeCtx[A])] =

val (left, right, path) = context

right match

case Tree.Leaf => None

case Tree.Branch(newLeft, newFocus, newRight) =>

Some(

(

newFocus,

(newLeft, newRight, (focus, Direction.Right, left) :: path)

)

)

Final remarks

We learned about algebras, zippers, one hole contexts, and we learned how to use highschool math to come up with data structures that are actually useful in your day-to-day programming. This is super neat in my opinion. I'll leave you with a remark from the original paper (which you should read) from Conor McBride.

One only has to open one’s old school textbooks almost at random and ask "What does this mean for datatypes?"